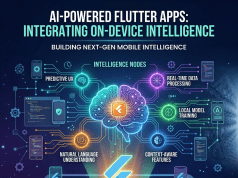

Mobile applications are changing faster than most businesses anticipated.

For years, apps were designed around taps, menus, forms, and predefined workflows. But in 2026, users increasingly expect applications to behave more like intelligent assistants than traditional software interfaces.

This shift is one of the biggest reasons businesses are integrating large language models (LLMs) into Flutter mobile applications.

LLMs are enabling mobile apps to understand natural language, generate contextual responses, summarize information, automate workflows, and simplify user interactions in ways that were difficult to achieve only a few years ago.

At the same time, Flutter has become one of the most widely adopted frameworks for cross-platform mobile development because of its speed, UI flexibility, and scalable architecture.

The combination of Flutter and LLMs now helps businesses build AI-native mobile experiences for iOS and Android from a single codebase, without maintaining separate development ecosystems.

But many companies are also discovering that integrating LLMs into mobile apps involves far more than adding a chatbot interface.

The real challenge is designing useful AI experiences that reduce friction instead of creating more complexity.

Mobile Users Are Moving Toward Conversational Interactions

One of the biggest shifts happening across mobile products is the move from navigation-heavy interfaces toward conversational experiences.

Traditional mobile apps require users to manually search, filter, navigate menus, and complete repetitive actions step-by-step. As apps become more feature-heavy, this often creates operational fatigue for users.

LLMs are changing that interaction model.

Instead of navigating through multiple screens, users can now communicate intent directly through natural language.

For example:

- “Summarize my recent expenses.”

- “Find delayed project tasks.”

- “Recommend products based on my activity.”

- “Generate a meeting summary.”

- “Create a workout plan for this week.”

The app handles the complexity behind the scenes.

This transition matters because modern users increasingly value speed and simplicity more than interface depth. They want outcomes faster, especially on mobile devices where attention spans are shorter and interactions happen more frequently throughout the day.

Flutter applications are particularly well-positioned for this shift because Flutter allows teams to create highly responsive UI systems while integrating cloud-based AI services, APIs, and conversational workflows efficiently.

Companies like OpenAI, Google (with Gemini), and Anthropic are accelerating enterprise adoption of LLM infrastructure, while development firms such as GeekyAnts and other Flutter-focused engineering teams are helping businesses integrate these AI capabilities into scalable mobile ecosystems.

The result is a growing shift toward mobile experiences designed around intent rather than navigation.

LLMs Are Expanding What Flutter Apps Can Do

One reason LLM integration is growing rapidly is that businesses are discovering that conversational AI can solve several operational problems at once.

Instead of building separate systems for search, recommendations, automation, support, and onboarding, businesses can increasingly use LLM-powered interfaces to simplify interactions across the entire product experience.

Modern Flutter applications are using LLMs for:

- AI chat assistants

- Intelligent customer support

- Personalized recommendations

- Workflow automation

- Voice-enabled interactions

- AI-generated summaries

- Smart search experiences

- Content generation

- Multilingual communication

This is especially valuable for SaaS, fintech, healthcare, ecommerce, and productivity applications where users interact with large amounts of information daily.

For enterprise mobility platforms, LLMs can reduce dashboard fatigue. Employees can retrieve insights, generate reports, or automate repetitive operational tasks using conversational prompts instead of navigating multiple workflows manually.

This improves usability while also reducing onboarding complexity for new users.

However, businesses are also learning that successful LLM integration depends heavily on UX design quality.

Many AI mobile experiences still feel inefficient because they overload users with chatbot windows or generic AI assistants that provide inconsistent results.

Users do not necessarily want visible AI everywhere.

They want faster and easier interactions.

That difference is becoming critical in mobile product strategy.

The Biggest Challenges Are Performance, Privacy, and Trust

While LLM integration creates major opportunities, it also introduces new technical and operational challenges for businesses.

One of the biggest concerns is performance.

LLMs can increase latency, API costs, memory usage, and battery consumption if implemented poorly in mobile apps. Businesses increasingly need lightweight AI architectures that keep mobile experiences responsive without overloading devices or infrastructure.

This is why many companies are exploring hybrid AI strategies where some processing happens on-device while larger AI tasks run through cloud infrastructure.
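One way to picture a hybrid strategy is a small routing function that decides where each request runs. The sketch below is illustrative only: the task categories, the `chooseTarget` function, and the 2,000-character threshold are assumptions made for this example, and it does not call any real on-device or cloud model.

```dart
/// Where a request should be processed. Both targets are placeholders;
/// this sketch does not call any real model or API.
enum InferenceTarget { onDevice, cloud }

/// Hypothetical task categories, used only for illustration.
enum AiTask { classifyIntent, autocomplete, summarize, generateReport }

/// Tasks assumed to be small enough for a local model.
const Set<AiTask> lightweightTasks = {
  AiTask.classifyIntent,
  AiTask.autocomplete,
};

/// Chooses a target from the task type, input size, and connectivity.
InferenceTarget chooseTarget({
  required AiTask task,
  required int inputChars,
  required bool isOnline,
  int maxOnDeviceChars = 2000, // assumed threshold, tune per device
}) {
  // Without a connection, on-device is the only option in this sketch.
  if (!isOnline) return InferenceTarget.onDevice;

  final fitsLocally =
      lightweightTasks.contains(task) && inputChars <= maxOnDeviceChars;
  return fitsLocally ? InferenceTarget.onDevice : InferenceTarget.cloud;
}
```

A real app would likely also weigh battery level, device capability, and per-request cost, and would decide how to degrade when a heavy task arrives while offline.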

Privacy is another major issue.

LLM-powered features depend heavily on user data and contextual inputs, which are often sent to a model as part of each prompt. Businesses integrating conversational AI into Flutter apps must now think carefully about data handling, consent management, enterprise compliance, and secure AI infrastructure.

This is especially important in industries like healthcare, finance, and enterprise SaaS where regulatory requirements are strict.
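As a rough illustration of data handling and consent, an app can check consent and strip obvious personal data before any prompt leaves the device. The function names and placeholder tokens below are hypothetical, and the two patterns are deliberately simple. They are not a substitute for a compliance review or a proper PII detection service.

```dart
/// Patterns for two common kinds of personal data. These are deliberately
/// simple and will miss many real-world formats.
final RegExp _emailPattern = RegExp(r'[\w.+-]+@[\w-]+(\.[\w-]+)+');
final RegExp _longDigitPattern = RegExp(r'\b\d(?:[ -]?\d){8,}\b');

/// Returns [prompt] with emails and long digit runs (nine or more digits,
/// optionally separated by spaces or hyphens) replaced by placeholders.
String redactPrompt(String prompt) {
  return prompt
      .replaceAll(_emailPattern, '[email]')
      .replaceAll(_longDigitPattern, '[number]');
}

/// Returns the text that may leave the device, or null without consent.
String? prepareForCloud(String prompt, {required bool hasConsent}) {
  if (!hasConsent) return null;
  return redactPrompt(prompt);
}
```

With these definitions, `redactPrompt('Email jane.doe@example.com about card 4111 1111 1111 1111')` returns `Email [email] about card [number]`, and `prepareForCloud` returns `null` whenever the user has not given consent.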

Trust is also becoming a defining factor.

Users quickly lose confidence in AI systems that generate inaccurate information, hallucinate responses, or misunderstand intent repeatedly. Businesses are realizing that conversational AI experiences require stronger validation systems, contextual memory handling, and explainable outputs to maintain reliability.
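A validation layer can be as simple as refusing to display a reply that does not have the expected shape or that cites data the app never supplied. The sketch below assumes the prompt asked the model to reply with JSON containing an `answer` string and a `sources` list of record IDs. That response format, the `GroundedAnswer` class, and the `validateAnswer` function are assumptions for this example, not part of any provider's API.

```dart
import 'dart:convert';

/// A reply that passed validation. Field names are assumptions for this sketch.
class GroundedAnswer {
  final String answer;
  final List<String> sources;
  const GroundedAnswer(this.answer, this.sources);
}

/// Parses [raw] model output and returns null when any check fails,
/// so the caller can retry or fall back to a non-AI flow.
GroundedAnswer? validateAnswer(String raw, Set<String> knownSourceIds) {
  Object? decoded;
  try {
    decoded = jsonDecode(raw);
  } on FormatException {
    return null; // the reply was not valid JSON
  }
  if (decoded is! Map<String, dynamic>) return null;

  final answer = decoded['answer'];
  final sources = decoded['sources'];
  if (answer is! String || answer.trim().isEmpty) return null;
  if (sources is! List || sources.isEmpty) return null;

  // Every cited ID must be one the app actually sent as context.
  final ids = <String>[];
  for (final id in sources) {
    if (id is! String || !knownSourceIds.contains(id)) return null;
    ids.add(id);
  }
  return GroundedAnswer(answer.trim(), ids);
}
```

Checking cited IDs against known records does not prove the answer is correct, but it catches one common failure (references to data that does not exist) and gives the UI something concrete to show as an explanation.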

As a result, AI governance is becoming just as important as AI capability itself.

The Future of Flutter Apps Will Feel More Intelligent and Less Manual

The next generation of Flutter applications will likely rely far less on traditional mobile interaction patterns.

Users will increasingly expect apps to predict intent, automate repetitive actions, generate contextual responses, and simplify workflows naturally through AI-driven interactions.

This transition is already reshaping enterprise mobility, ecommerce platforms, healthcare apps, fintech systems, learning products, and SaaS ecosystems.

Businesses that continue treating LLMs as isolated chatbot features may struggle to create meaningful differentiation.

The companies likely to succeed will be the ones using LLMs to quietly reduce friction throughout the entire mobile experience.

That is why integrating LLMs into Flutter applications has become one of the most important conversations in mobile development.

The opportunity is no longer just about adding AI to apps.

It is about redesigning how users interact with mobile products altogether.